- #JUPYTER NOTEBOOK ONLINE GPU HOW TO#

- #JUPYTER NOTEBOOK ONLINE GPU INSTALL#

- #JUPYTER NOTEBOOK ONLINE GPU CODE#

- #JUPYTER NOTEBOOK ONLINE GPU DOWNLOAD#

#JUPYTER NOTEBOOK ONLINE GPU DOWNLOAD#

These browser extensions generate a curl/wget command as soon as you click any download button in your browser. You can then copy that command and execute it in your Colab notebook to download the dataset.

NOTE: By default, a Colab notebook cell runs Python. To run terminal commands in Colab, you have to put "!" at the beginning of the command. For example, to download a file from some.url and save it as some.file, you can use the following command in Colab: `!curl some.url --output some.file`
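The same download can also be done from Python code instead of a shell command. The sketch below is one possible way to do it with the standard library only. `download_file` is a hypothetical helper name introduced for illustration, and the URL in the usage comment is a placeholder, not a real dataset location.

```python
import shutil
import urllib.request
from pathlib import Path


def download_file(url: str, destination: str) -> Path:
    """Fetch `url` and save it at `destination`, similar to `curl <url> --output <destination>`."""
    target = Path(destination)
    # Copy the response to disk in chunks so large datasets are not held in memory.
    with urllib.request.urlopen(url) as response, target.open("wb") as out_file:
        shutil.copyfileobj(response, out_file)
    return target


# Example call (placeholder URL, for illustration only):
# download_file("https://example.com/data.csv", "some.file")
```

Unlike curl, `urllib` needs the scheme (`http://`, `https://`, or `file://`) spelled out in the URL.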

#JUPYTER NOTEBOOK ONLINE GPU HOW TO#

After the install command finishes, click RESTART RUNTIME so that the newly installed version is used. In this example, the TensorFlow version changed from 2.3.0 to 1.5.0.

The test accuracy is around 97% for the MNIST model trained in this tutorial. Not bad at all, but this was an easy one. Training models usually isn't that easy, and we often have to download datasets from third-party sources like Kaggle. So let's see how to download datasets when we don't have a direct link available.

#JUPYTER NOTEBOOK ONLINE GPU INSTALL#

To use a library that is not pre-installed, or a different version of one, you'll need to install packages manually. The package manager used for installing packages is pip. To install a particular version of TensorFlow, use this command: `!pip3 install tensorflow==1.5.0`
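Pinning a version and then checking what is actually installed can also be done from Python. This is a minimal sketch, assuming Python 3.8 or newer. The helper names `pip_install_command`, `install` and `installed_version` are hypothetical and exist only for this illustration. Nothing is installed unless `install` is called explicitly.

```python
import subprocess
import sys
from importlib import metadata
from typing import List, Optional


def pip_install_command(package: str, version: str) -> List[str]:
    """Build the pip command that pins `package` to an exact `version`."""
    # `sys.executable -m pip` targets the interpreter the notebook is running on.
    return [sys.executable, "-m", "pip", "install", f"{package}=={version}"]


def install(package: str, version: str) -> None:
    """Run the pinned install; raises CalledProcessError if pip fails."""
    subprocess.run(pip_install_command(package, version), check=True)


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


command = pip_install_command("tensorflow", "1.5.0")
print(" ".join(command))
print("tensorflow currently installed:", installed_version("tensorflow"))
```

The version reported by `installed_version` reflects what is on disk. A library that was already imported keeps its old version in memory until the runtime is restarted.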

#JUPYTER NOTEBOOK ONLINE GPU CODE#

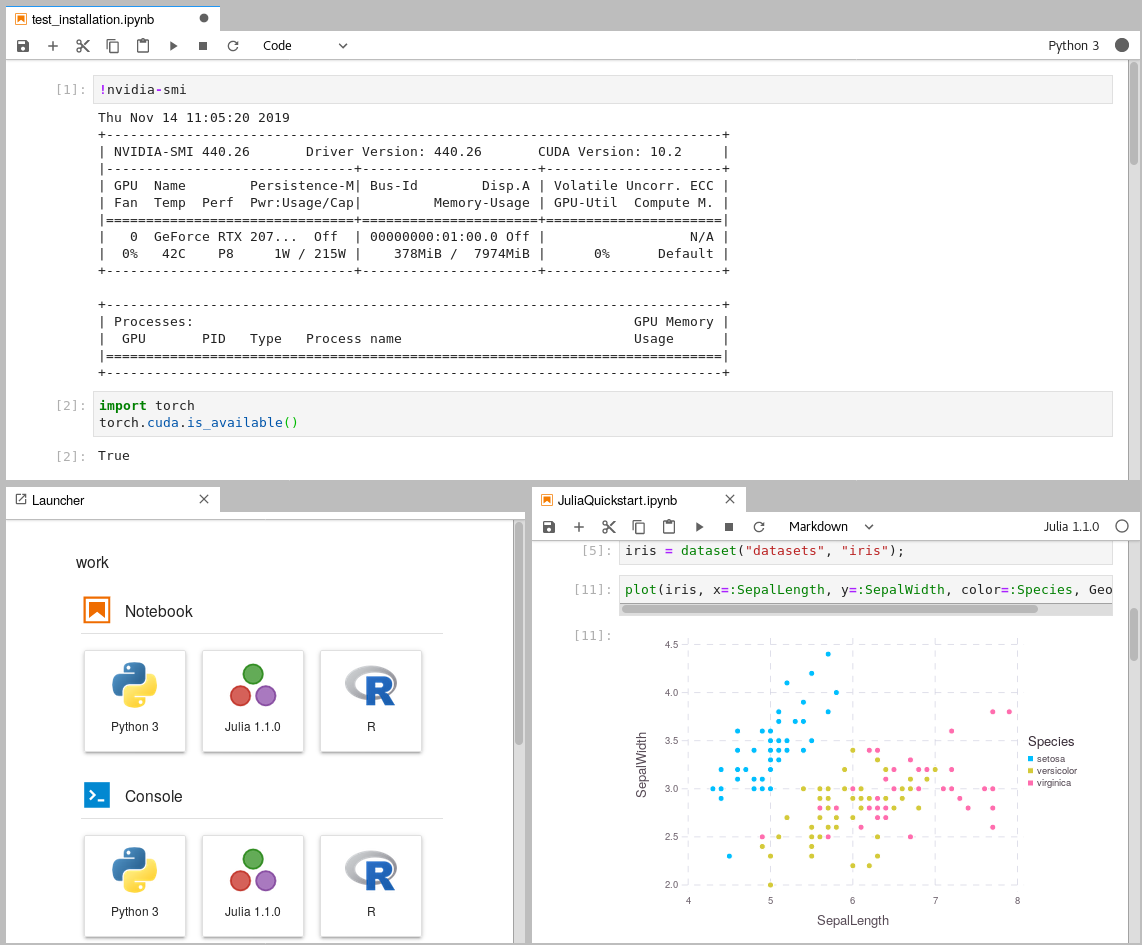

You can use the code cell in Colab not only to run Python code but also to run shell commands. An exclamation point at the start of a line tells the notebook cell to run the rest of that line as a shell command. Most general packages needed for deep learning come pre-installed. In some cases, you might need less popular libraries, or you might need to run code on a different version of a library.
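To make the idea of handing a command to the shell concrete, here is a rough plain-Python analogue of a `!` line. It is a sketch of the effect, not a description of how Colab implements the prefix, and `run_shell` is a hypothetical helper name.

```python
import subprocess


def run_shell(command: str) -> str:
    """Run `command` through the system shell and return its standard output."""
    completed = subprocess.run(
        command,
        shell=True,           # let the shell parse the command line
        check=True,           # raise CalledProcessError on a non-zero exit code
        capture_output=True,  # collect stdout and stderr instead of printing them
        text=True,            # decode the output to str
    )
    return completed.stdout.strip()


print(run_shell("echo hello from the shell"))
```

In a notebook the `!` form is shorter and streams output as the command runs. The `subprocess` form is useful when the output needs to be captured and processed in Python.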

If you're a programmer who wants to explore deep learning and needs a platform to help you do it, this tutorial is for you.

Google Colab is a great platform for deep learning enthusiasts, and it can also be used to test basic machine learning models, gain experience, and develop an intuition about deep learning aspects such as hyperparameter tuning, data preprocessing, model complexity, overfitting and more.

Colaboratory by Google (Google Colab for short) is a Jupyter notebook based runtime environment which allows you to run code entirely in the cloud. This matters because it means that you can train large-scale ML and DL models even if you don't have access to a powerful machine or a high-speed internet connection. Google Colab supports both GPU and TPU instances, which makes it a good fit for deep learning and data analytics enthusiasts whose local machines are computationally limited. Since a Colab notebook can be accessed remotely from any machine through a browser, it's well suited for commercial purposes as well.

For example, let's look at training a basic deep learning model to recognize handwritten digits from the MNIST dataset. The data is loaded from the standard Keras dataset archive. The model is very basic: it classifies each image as one of the digits.

Setup:

- Import the necessary libraries: `import tensorflow as tf`
- Load the training data and split it into train and test sets: `(x_train, y_train), (x_test, y_test) = mnist.load_data()` (here `mnist` refers to `tf.keras.datasets.mnist`)

The output for this code snippet will look like this: a "Downloading data from" line followed by the progress report 11493376/11490434 - 0s 0us/step

Next, we define the model in Python:

- Define the model.
- Define the optimizer, loss function and evaluation metric.
- Extend the base model to predict softmax output by wrapping it in a new `tf.keras.Sequential` model named `probability_model`.
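The "extend the base model to predict softmax output" step in the MNIST walkthrough turns the model's raw scores (logits) into probabilities that sum to 1. The sketch below shows that conversion in plain Python, without TensorFlow. The three scores are made-up values for illustration; an MNIST digit classifier would produce ten, one per digit.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Convert raw scores into probabilities that sum to 1."""
    # Subtracting the maximum avoids overflow in exp() and does not change the result.
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # made-up scores for three classes
probabilities = softmax(logits)
# The predicted class is the index with the highest probability.
predicted_class = probabilities.index(max(probabilities))
print(probabilities, predicted_class)
```

Softmax preserves the ranking of the scores, so the predicted class is the same before and after the conversion. Only the scale changes, which makes the output easier to read as a confidence.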